The Test That Led a Team in the Wrong Direction

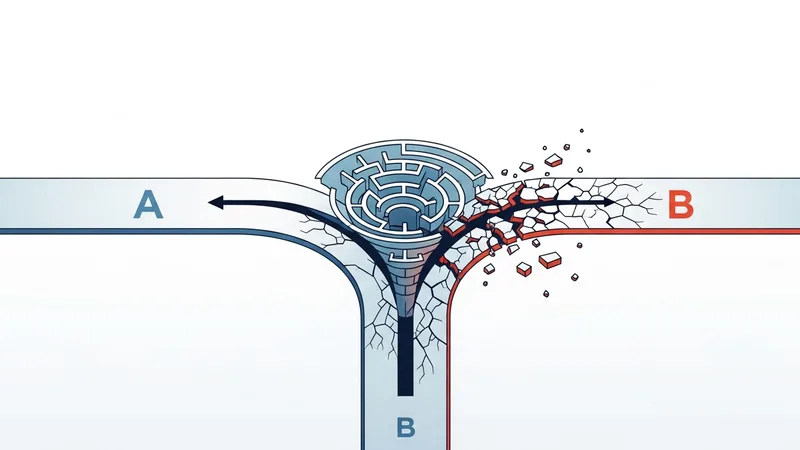

Bad A/B test results have sent more marketing teams in the wrong direction than bad creative ever has, and most teams never realize their data was wrong. A fintech startup I advised spent six weeks running an A/B test on their signup flow. Variant A was their existing two-step form. Variant B was a simplified single-step form they hypothesized would reduce friction and increase signups.

After six weeks, the data was clear: Variant B outperformed Variant A by 34%. The team was thrilled. They rolled out Variant B to 100% of traffic, updated their onboarding sequence, and moved on to the next project.

Three weeks later, their actual signup rate was lower than before the test started. Not slightly lower: 18% lower than Variant A's pre-test performance. Something was fundamentally wrong.

The Hidden Variable That Corrupted the A/B Test Results

During week two of the test, the startup's analytics team installed a new heatmap tracking script. The script added 280KB of JavaScript to every page. It loaded synchronously, meaning it blocked page rendering until it finished downloading. This is the kind of problem that continuous monitoring catches early.

Variant A, the two-step form, loaded on a separate page from the landing page. As a result, the heatmap script loaded twice for Variant A visitors: once on the landing page and once on the form page. Total added load time: about 1.4 seconds across two page loads.

Variant B, the single-step form, kept everything on one page. The heatmap script loaded once. Total added load time: about 0.7 seconds on a single page load.

Variant B did not win because it was a better form design. It won because it was less affected by a tracking script that artificially slowed down both variants but slowed down Variant A twice as much. Catching issues like this early prevents the damage from compounding.

When the team removed the heatmap script after discovering the issue, Variant A's conversion rate recovered. The single-step form did not actually convert better than the two-step form. The test result was an artifact of a page speed discrepancy. We see this pattern frequently. As we explored in our piece on the real cost of a slow landing page, even small speed differences have outsized effects on conversion.

Five Factors That Corrupt A/B Test Data

The heatmap script is one example of a broader category of issues that can invalidate A/B test results. Here are the five most common:

1. Unequal page speed between variants

If one variant loads faster than the other, whether due to image sizes, script loading, or server-side rendering differences, you are not testing design differences. You are testing speed differences, and speed will almost always be the dominant variable. A routine page speed check on both variants would have flagged this within minutes.

2. Tracking failures during the test period

If your conversion tracking stops working intermittently during a test, any variant affected by the outage appears to have a lower conversion rate than it really does. If the outage affects both variants equally, the test remains valid but underpowered. If it affects them unequally, which happens when variants load different pages or trigger different events, the results are unreliable.

3. Insufficient sample size

Calling a test after 200 visitors per variant is tempting when the numbers look good, but the result is statistically meaningless. For a test to detect a 10% relative improvement with 95% confidence, you typically need thousands of visitors per variant: roughly 3,000-5,000 when the baseline conversion rate is high, and considerably more when it is in the low single digits. Most tests are called too early.
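
To see how strongly the required sample depends on the baseline, here is a minimal sketch using the standard normal-approximation formula for comparing two proportions. It assumes a two-sided test and 80% statistical power; the power level is an assumption on my part, since the figures above only specify 95% confidence. The function name `visitors_per_variant` and the example baselines are illustrative.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided two-proportion z-test.

    alpha=0.05 corresponds to 95% confidence; power=0.80 is an assumed default.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the confidence level
    z_power = NormalDist().inv_cdf(power)          # critical value for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


# Same 10% relative lift, three hypothetical baseline conversion rates.
for baseline in (0.05, 0.15, 0.30):
    needed = visitors_per_variant(baseline, 0.10)
    print(f"{baseline:.0%} baseline: {needed:,} visitors per variant")
```

Under these assumptions, a 30% baseline lands inside the 3,000-5,000 range, while a 5% baseline needs on the order of 30,000 visitors per variant. A dedicated sample size calculator may use a slightly different formula and give somewhat different numbers.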

4. External events during the test window

A competitor launches a major promotion. A holiday weekend changes traffic patterns. An industry publication mentions your brand. Any external event that shifts visitor behavior during your test period can skew results, especially if the event's impact is not distributed evenly across the test duration. This is why anomaly detection during the test window matters for every campaign.

5. Form or checkout failures on one variant

If the form on Variant A breaks for 4 hours on day three of your test while Variant B's form works perfectly, Variant A's data is contaminated. Unless you are monitoring form functionality continuously, you will never know this happened. You will just see a performance gap and attribute it to design.
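
If monitoring does surface an outage window, one way to handle it is to drop the affected time range before computing conversion rates. The sketch below is illustrative only: the visit records are made-up toy data, the field names (`variant`, `time`, `converted`) are assumptions rather than any particular tool's export format, and it excludes the window for both variants so that each is measured over the same time periods.

```python
from datetime import datetime


def conversion_rate(visits, variant, excluded_windows=()):
    """Conversion rate for one variant, skipping visits inside any excluded window."""
    kept = [
        v for v in visits
        if v["variant"] == variant
        and not any(start <= v["time"] < end for start, end in excluded_windows)
    ]
    if not kept:
        return None  # no usable data for this variant
    return sum(v["converted"] for v in kept) / len(kept)


def visit(variant, hour, minute, converted):
    """Build one toy visit record on a hypothetical test day."""
    return {"variant": variant, "time": datetime(2024, 3, 3, hour, minute), "converted": converted}


# Made-up data: Variant A's form is broken from 12:00 to 16:00.
visits = [
    visit("A", 9, 0, True), visit("A", 10, 0, False),
    visit("A", 13, 0, False), visit("A", 14, 0, False),  # during the outage
    visit("A", 18, 0, True), visit("A", 19, 0, False),
    visit("B", 9, 30, True), visit("B", 10, 30, False),
    visit("B", 13, 30, True), visit("B", 14, 30, False),
    visit("B", 18, 30, True), visit("B", 19, 30, False),
]
outage = (datetime(2024, 3, 3, 12, 0), datetime(2024, 3, 3, 16, 0))

for variant in ("A", "B"):
    raw = conversion_rate(visits, variant)
    cleaned = conversion_rate(visits, variant, excluded_windows=[outage])
    print(f"Variant {variant}: raw {raw:.0%}, outage excluded {cleaned:.0%}")
```

In this toy data the raw numbers show Variant B ahead, and the gap disappears once the outage window is excluded. Excluding data shrinks your sample, so the test may need to run longer to reach the planned size.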

How to Protect Your Test Integrity

Reliable A/B testing requires more than a testing tool and a hypothesis. It requires environmental stability. Here is how to ensure your test results reflect reality:

- Freeze your page during testing. Do not add or remove scripts, pixels, or page elements while a test is running. Any change introduces a confounding variable.

- Monitor both variants continuously. Check page speed, form functionality, and tracking pixel status on both variants throughout the test. If either variant experiences a technical issue, note the time window and exclude that data from your analysis.

- Wait for statistical significance. Use a sample size calculator before you start. Determine how many visitors you need and do not end the test until you reach that number. Early stopping inflates false positive rates.

- Check for speed parity. Measure load time on both variants before the test starts. If they differ by more than 0.3 seconds, equalize them before testing. Otherwise you are testing speed, not design.

- Segment by device and browser. A variant might perform better on desktop but worse on mobile due to layout differences. Looking only at aggregate numbers can hide these conflicts.
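
The speed parity check from the list above can be sketched in a few lines. This assumes you have already collected load time samples in seconds for each variant from whatever speed tool you use; the sample values are made up, and comparing medians is one reasonable choice rather than the only one.

```python
from statistics import median

MAX_GAP_SECONDS = 0.3  # parity threshold suggested in the checklist


def speed_parity(load_times_a, load_times_b, max_gap=MAX_GAP_SECONDS):
    """Return (gap_in_seconds, within_threshold) based on median load times."""
    gap = abs(median(load_times_a) - median(load_times_b))
    return gap, gap <= max_gap


# Hypothetical load time samples in seconds, one list per variant.
variant_a = [2.9, 3.1, 3.0, 3.4, 3.2]
variant_b = [2.3, 2.5, 2.4, 2.6, 2.2]

gap, ok = speed_parity(variant_a, variant_b)
if ok:
    print(f"Variants are within {MAX_GAP_SECONDS}s of each other (gap {gap:.2f}s)")
else:
    print(f"Speed gap of {gap:.2f}s exceeds {MAX_GAP_SECONDS}s: equalize before testing")
```

Medians are used here because a single slow request can distort an average. Running the same check periodically during the test, not only before it starts, would have caught a mid-test change like the heatmap script.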

Trust Your Data Only When Your Funnel Is Healthy

A/B testing is one of the most powerful tools in a marketer's arsenal when the data is clean. But the data is only clean when your funnel is functioning correctly throughout the test period. Before you launch your next test, run a free scan on both variant pages to verify that page speed, tracking, and functionality are equal and stable. The last thing you want is to make a major strategic decision based on data that was corrupted by a technical issue nobody noticed.